(TL;DR: user roles at UX manager)

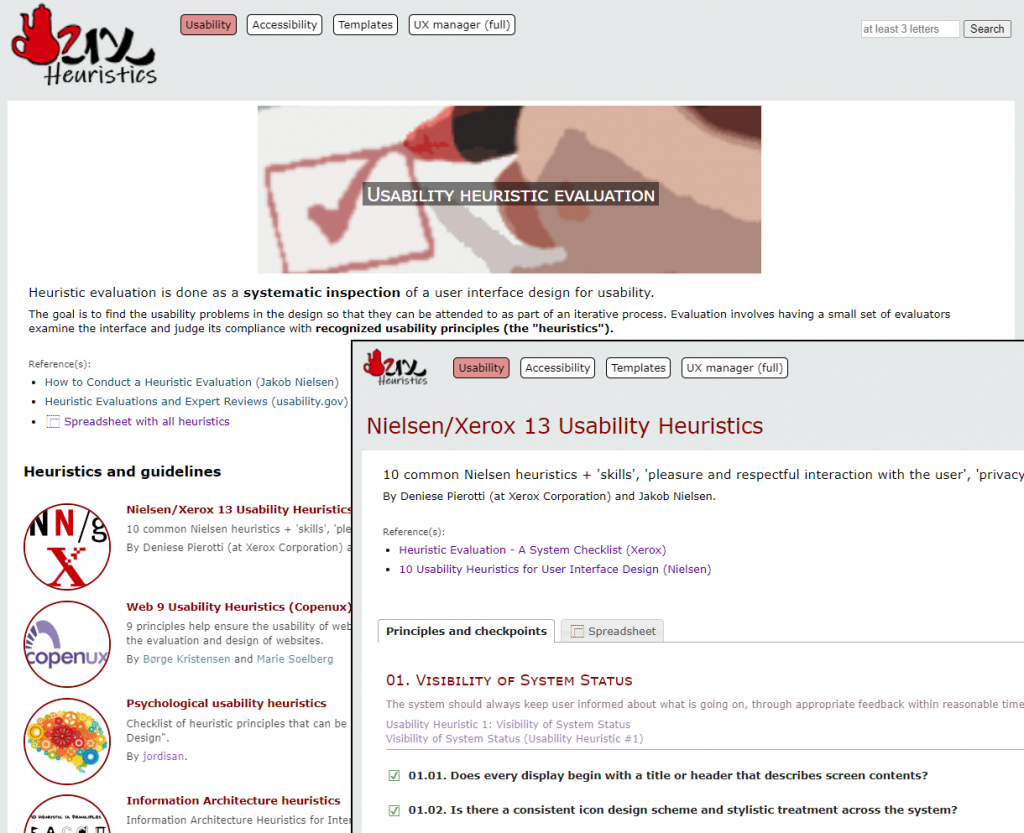

When we talk about usability heuristics, Nielsen's always come to mind, but they are not the only ones. At heuristics.uxmanager.net you will find a compilation of usability heuristics, including some for specific cases like Single-Page Applications (SPA) or mobile, and even a set written from a psychological point of view. All of them are also available as spreadsheets, so you can use them for your own evaluations.

Besides those heuristics, the website includes a collection of accessibility guidelines, including WCAG 2.1, all of them also available as spreadsheets. Although usability heuristic evaluations and accessibility evaluations are usually considered different techniques, in practice they are similar in essence (i.e., both check whether an interface complies with a set of principles), and the specialists who perform them are often the same people.

So we have a compilation of usability heuristics and accessibility guidelines, and even spreadsheets to use them for evaluations; what else can we do with them?